Enterprise-Grade Agentic RAG That Has to Be Right

Turn complex, unstructured data into reliable, auditable answers without building fragile retrieval infrastructure in-house.

- Ingest any document, format, or data source

- Multi-pass, context-aware retrieval without brittle vector search

- Built for compliance, scale, and production reliability

Why Most RAG Systems Fail in Production

Most RAG implementations work in demos but break when they encounter real-world data, real-world scale, and real-world stakes.

"When the data matters, retrieval failure is product failure."

Built for Real-World Data Complexity

Not another wrapper around vector search. Thalamus is purpose-built retrieval infrastructure.

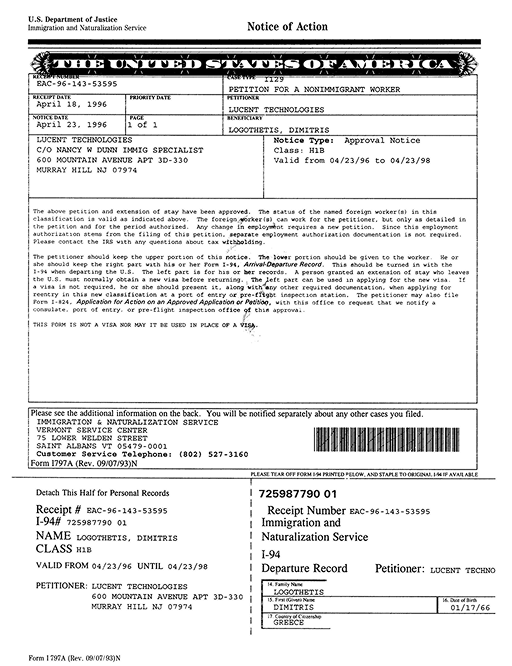

Native Multimodal Ingestion

Images, video, and unstructured PDFs are first-class citizens. Thalamus ingests and understands rich media natively so your pipeline doesn't break when real-world data shows up.

Agentic Retrieval Orchestration

A multi-pass reasoning layer that autonomously routes queries, identifies knowledge gaps in real time, and synthesizes answers across sources, going well beyond simple similarity search.

Traceable & Verifiable Outputs

Every answer is citation-backed and auditable end-to-end. Built to reduce liability and foster trust in regulated workflows.

Enterprise Security

Your data stays yours. Tenant isolation, encryption at rest, TLS in transit, and a compliance-ready architecture built from the ground up.

Built for Teams Working With High-Value Documents

Purpose-built for the industries where document accuracy is non-negotiable.

- Ship AI search, chat, and document analysis without building RAG from scratch

- Scale ingestion and retrieval as your customer base grows

- Focus engineering on product differentiation, not infrastructure

- Surface critical clauses instantly across thousands of contracts

- Reduce review time without missing risk

- Power AI assistants your clients can actually trust

- Retrieve precise answers across filings, reports, and regulatory docs

- Maintain audit trails for every AI-generated response

- Avoid hallucinations in regulated workflows

Build Your Own Retrieval, or Ship Faster With Thalamus

These numbers come from actual client total cost of ownership (TCO) analyses.

Most teams don't need to build RAG. They need reliable retrieval.

How Thalamus Fits Into Your Stack

Four stages from raw documents to grounded answers.

Ingest

Connect your document sources. Thalamus normalizes and processes any format.

Enrich

Documents are parsed, structured, and enriched with metadata, with context preserved throughout.

Retrieve

Agentic retrieval with multi-pass reasoning and citation-backed answers.

Deliver

Clean, grounded context delivered to your LLM, application, or UI.

If Your AI Relies on Documents, Retrieval Is Key

Let's talk about your architecture and see whether Thalamus is a fit.

Request a Demo

Trusted by teams building AI in document-heavy, high-stakes environments

Built by a team with 10+ years of experience in enterprise-scale retrieval and information extraction, backed by patents in the field.